Assessing uncertainty of sequence representations generated by protein language models

Language model-inferred embeddings are replacing structure-derived descriptions of proteins, genes and genomes. We propose a model-agnostic measure to quantify the reliability of these new representations.

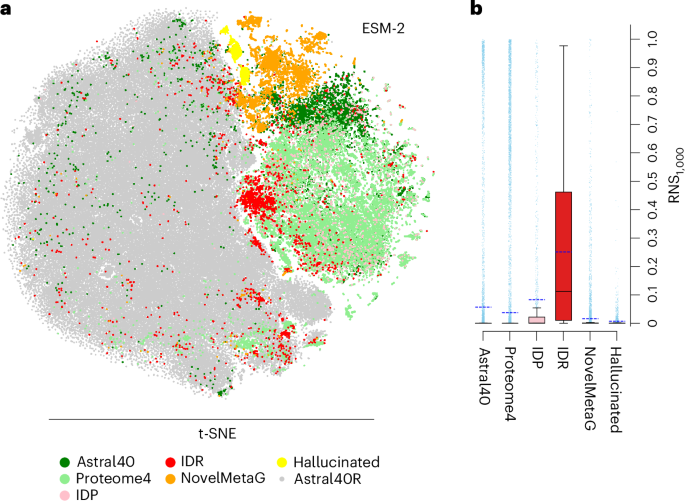

Fig. 1: RNS-based assessments of embeddings identify poorly represented proteins across different data sets.

References

- Vaswani, A. et al. Attention is all you need. Adv. Neural Inf. Process. Syst. 30 (2017). This work introduces the attention mechanism in transformer architecture.

- Weissenow, K. & Rost, B. Are protein language models the new universal key? Curr. Opin. Struct. Biol. 91, 102997 (2025). This review article discusses the transition from evolutionary information to machine-learned embeddings for protein prediction.

- Dallago, C. et al. Learned embeddings from deep learning to visualize and predict protein sets. Curr. Protoc. 1, e113 (2021). This article introduces ‘Bioembeddings’, a publicly available library of pLM pipelines.

- Needleman, S. B. & Wunsch, C. D. A general method applicable to the search for similarities in the amino acid sequence of two proteins. J. Mol. Biol. 48, 443–453 (1970). The earliest work we have identified that illustrates the use of random sequences to evaluate the significance of protein sequence similarities.

Additional information

Publisher’s note Springer Nature remains neutral with regard to jurisdictional claims in published maps and institutional affiliations.

This is a summary of: Prabakaran, R. & Bromberg, Y. Quantifying uncertainty in protein representations across models and tasks. Nat. Methods https://doi.org/10.1038/s41592-026-03028-7 (2026).

About this article

Cite this article

Assessing uncertainty of sequence representations generated by protein language models. Nat Methods (2026). https://doi.org/10.1038/s41592-026-03027-8

- Published: 01 April 2026

- Version of record: 01 April 2026

- DOI: https://doi.org/10.1038/s41592-026-03027-8
