Why natural transformations?


This post is aimed primarily at people who know what a category is in the extremely broad strokes, but aren't otherwise familiar or comfortable with category theory.

One of mathematicians' favourite activities is to describe compatibility between the structures of mathematical artefacts. Functions translate the structure of one set to another, continuous functions do the same for topological spaces, and so on... Many among these "translations" have the nice property that their character is preserved by composition. At some point, it seems that some mathematicians noticed that they:

- kept defining intuitively similar properties for these different structures
- had wayyyyyy too much time on their hands

So they generalised this concept into a unified theory. Categories consist of objects and morphisms connecting objects, and morphisms are closed under composition. As in our opening examples, we will think of objects as sets and of morphisms as functions, even though the language of categories is strictly more expressive than that. Once we have categories, we reflexively wish to define a "morphism of categories". Given categories C and D, a functor $F: C \to D$ sends objects to objects and morphisms to morphisms such that composition of morphisms can be done either inside C before applying the functor, or inside D after applying it: for morphisms $f: X \to Y$ and $g: Y \to Z$ in C, we have $F(g \circ f) = F(g) \circ F(f)$ (and identities are sent to identities, $F(\mathrm{id}_X) = \mathrm{id}_{F(X)}$).
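To make the composition law concrete, here is a minimal Python sketch. It assumes, in the loose spirit of the post, that Python types play the role of objects and Python functions play the role of morphisms. The list functor sends a type `X` to "lists of `X`" and sends a function `f` to "apply `f` to every element". The names `compose`, `identity`, `fmap_list`, `double_to_str` and `length` are hypothetical, and checking a sample input only tests the laws; it does not prove them.

```python
from typing import Callable, List, TypeVar

X = TypeVar("X")
Y = TypeVar("Y")
Z = TypeVar("Z")


def compose(g: Callable[[Y], Z], f: Callable[[X], Y]) -> Callable[[X], Z]:
    """Morphism composition g . f: apply f first, then g."""
    return lambda x: g(f(x))


def identity(x: X) -> X:
    """The identity morphism on any object (type)."""
    return x


def fmap_list(f: Callable[[X], Y]) -> Callable[[List[X]], List[Y]]:
    """The list functor's action on a morphism f: apply f elementwise."""
    return lambda xs: [f(x) for x in xs]


# Two sample morphisms, int -> str and str -> int.
def double_to_str(n: int) -> str:
    return str(n * 2)


def length(s: str) -> int:
    return len(s)


sample = [1, 5, 50, 500]

# Composition law F(g . f) == F(g) . F(f), checked on one input.
lhs = fmap_list(compose(length, double_to_str))(sample)
rhs = compose(fmap_list(length), fmap_list(double_to_str))(sample)
print(lhs, rhs)  # [1, 2, 3, 4] [1, 2, 3, 4]

# Identity law F(id) == id, checked on the same input.
print(fmap_list(identity)(sample) == sample)  # True
```

Both sides of the composition law produce the same list, which is exactly the "compose in C, or compose in D after applying the functor" freedom described above.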

Still in possession of some spare time, you might next wonder how to define a morphism between two functors. This is where, in my experience, there ceases to be an "obvious" thing to do. All the morphisms we have considered thus far are functions, but it's not even clear from where to where a candidate function should go, since functors are not themselves sets.

To make the idea of a natural transformation seem not-entirely-crazy, it's worth taking a slightly different perspective on what else "preservation of structure" could mean. Consider the category of metric spaces, with continuous functions between them as morphisms. One can think of continuity in terms of the induced topologies, but metric spaces have additional properties that allow for a more specific interpretation. Notably, limits of sequences are unique, which defines an operation on convergent sequences: taking their limit. This operation is central to the abstract appeal of metric spaces. Moreover, the key characteristic of continuous functions is that they let us choose whether to take this limit before or after applying the function. Given a continuous function $f: X \to Y$ and a sequence $(x_n)$ in $X$ with limit $x$, we have $f\left(\lim_{n \to \infty} x_n\right) = \lim_{n \to \infty} f(x_n)$. This makes continuous functions a satisfying choice of morphism, because they let us perform the fundamental operation on metric spaces in either the source or the target (whichever is more convenient).

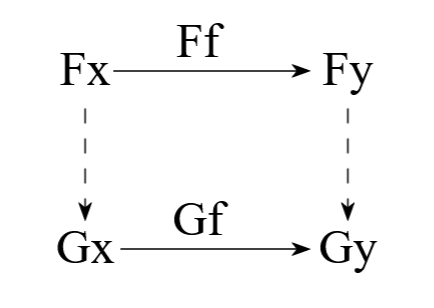
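The "limits commute with continuous functions" property can be poked at numerically. This is a rough sketch, assuming the real line with its usual metric, the sequence $x_n = 1/n$ (whose limit is $0$), and a crude stand-in for the limit that simply evaluates the sequence at a large index. `math.cos` is continuous, while the hypothetical `step` function jumps at $0$, so only the former should let us swap the order of "take the limit" and "apply the function". The helpers `x`, `approx_limit` and `commutes_with_limit` are illustrative names, not part of any library.

```python
import math
from typing import Callable


def x(n: int) -> float:
    """The sequence x_n = 1/n in the real line; its limit is 0."""
    return 1.0 / n


LIMIT_OF_X = 0.0  # known exactly for this particular sequence


def approx_limit(seq: Callable[[int], float], n: int = 10**6) -> float:
    """Crude stand-in for a limit: evaluate the sequence far along.
    A heuristic only; it does not prove convergence."""
    return seq(n)


def step(t: float) -> float:
    """A discontinuous function: 0 for t <= 0 and 1 for t > 0."""
    return 1.0 if t > 0 else 0.0


def commutes_with_limit(func: Callable[[float], float], tol: float = 1e-6) -> bool:
    """Compare f(lim x_n) with (approximately) lim f(x_n)."""
    limit_then_apply = func(LIMIT_OF_X)
    apply_then_limit = approx_limit(lambda n: func(x(n)))
    return math.isclose(limit_then_apply, apply_then_limit, abs_tol=tol)


for name, func in [("cos", math.cos), ("step", step)]:
    print(name, commutes_with_limit(func))
```

With these choices, `cos` agrees in both orders (up to the tolerance), while `step` gives $0$ when applied after taking the limit but $1$ along the whole sequence, so the two orders disagree.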

Abstracting away to categories, the conceptual appeal of a functor is that it respects the structure of morphisms between objects. Consequently, a good "morphism" between functors F and G (both from C to D) should let us disregard whether, for any morphism $f: A \to B$ in C, we use $F(f)$ or $G(f)$ for calculations inside D. That is, the morphism needs enough semantic content to always make the following diagram commute[1]:

$$
\begin{array}{ccc}
F(A) & \xrightarrow{\;F(f)\;} & F(B) \\
\big\downarrow{\scriptstyle \eta_A} & & \big\downarrow{\scriptstyle \eta_B} \\
G(A) & \xrightarrow{\;G(f)\;} & G(B)
\end{array}
$$

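This motivates the definition of a natural transformation $\eta: F \Rightarrow G$ as a family of maps $\eta_A: F(A) \to G(A)$ in D, one for each object $A$ of C, such that $\eta_B \circ F(f) = G(f) \circ \eta_A$ for every morphism $f: A \to B$ in C; that is, every diagram of the above type commutes. Reassuringly, the functors from C to D, taken as objects, with natural transformations between these functors as morphisms, themselves form a category!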
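The naturality condition can likewise be sketched in Python, in the same toy model where types are objects and functions are morphisms. Here $F$ is assumed to be the list functor and $G$ an "optional value" functor (assuming `None` never occurs as a genuine value). A single polymorphic function such as the hypothetical `safe_head` supplies a component $\eta_A$ for every type $A$ at once. The code checks the square $\eta_B \circ F(f) = G(f) \circ \eta_A$ on a few sample inputs, composes two natural transformations componentwise (the "vertical" composition that makes functors and natural transformations into a category), and shows a function that fails the check. All names are illustrative, and sampled checks are evidence rather than proof.

```python
from typing import Callable, List, Optional, TypeVar

X = TypeVar("X")
Y = TypeVar("Y")


def fmap_list(f: Callable[[X], Y]) -> Callable[[List[X]], List[Y]]:
    """Functor F (lists) acting on a morphism f: apply f elementwise."""
    return lambda xs: [f(x) for x in xs]


def fmap_opt(f: Callable[[X], Y]) -> Callable[[Optional[X]], Optional[Y]]:
    """Functor G (optional values) acting on f; None stays None."""
    return lambda m: None if m is None else f(m)


def safe_head(xs: List[X]) -> Optional[X]:
    """Candidate natural transformation List => Optional (first element)."""
    return xs[0] if xs else None


def reverse(xs: List[X]) -> List[X]:
    """Candidate natural transformation List => List."""
    return xs[::-1]


def bad_head(xs: List[int]) -> Optional[int]:
    """Not natural: its choice depends on the values in the list."""
    return max(xs) if xs else None


def vertical(eta2: Callable, eta1: Callable) -> Callable:
    """Vertical composition: the component at A is eta2_A . eta1_A."""
    return lambda xs: eta2(eta1(xs))


def is_natural_on(eta, fmap_src, fmap_tgt, f, xs) -> bool:
    """Check eta_B . F(f) == G(f) . eta_A on a single input xs."""
    return eta(fmap_src(f)(xs)) == fmap_tgt(f)(eta(xs))


def square(n: int) -> int:
    return n * n


samples = [[], [3], [-4, 1, 2]]
# reverse (List => List) followed by safe_head (List => Optional).
safe_last = vertical(safe_head, reverse)

print(all(is_natural_on(safe_head, fmap_list, fmap_opt, square, xs)
          for xs in samples))  # True
print(all(is_natural_on(safe_last, fmap_list, fmap_opt, square, xs)
          for xs in samples))  # True
print(is_natural_on(bad_head, fmap_list, fmap_opt, square, [-4, 1, 2]))  # False
```

`bad_head` fails because it inspects the values: squaring turns $-4$ into the largest element, so "square, then pick" gives $16$ while "pick, then square" gives $4$, and the square does not commute.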

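[1] "Commuting diagrams" is standard terminology in category theory: a diagram commutes when every path of arrows between the same two objects composes to the same morphism, which encodes the ability to permute, replace or swap out operations.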
