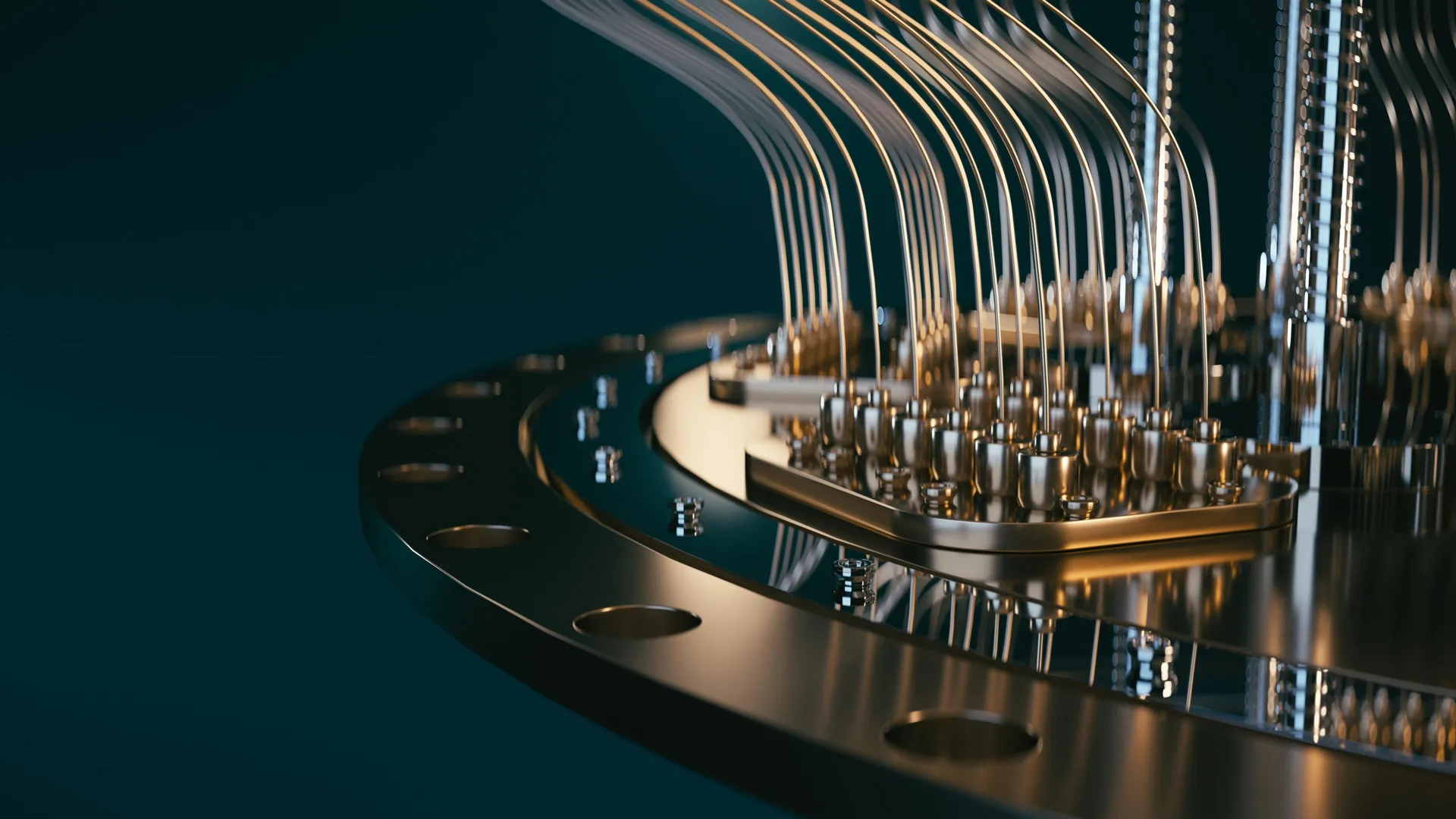

Quantum computer breakthrough tracks qubit fluctuations in real time

Qubits, the heart of quantum computers, can change performance in fractions of a second, but until now scientists couldn't see it happening. Researchers at the Niels Bohr Institute (NBI) have built a real-time monitoring system that tracks these rapid fluctuations about 100 times faster than previous methods. Using fast FPGA-based control hardware, they can instantly identify when a qubit shifts from "good" to "bad." The discovery opens a new path toward stabilizing and scaling future quantum processors.

Researchers at the Niels Bohr Institute have significantly increased how quickly changes in delicate quantum states can be detected inside a qubit. By combining commercially available hardware with new adaptive measurement techniques, the team can now observe rapid shifts in qubit behavior that were previously impossible to see.

Qubits are the fundamental units of quantum computers, which scientists hope will one day outperform today's most powerful machines. But qubits are extremely sensitive. The materials used to build them often contain tiny defects that scientists still do not fully understand. These microscopic imperfections can shift position hundreds of times per second. As they move, they alter how quickly a qubit loses energy and with it valuable quantum information.

Until recently, standard testing methods took up to a minute to measure qubit performance. That was far too slow to capture these rapid fluctuations. Instead, researchers could only determine an average energy loss rate, masking the true and often unstable behavior of the qubit.
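To illustrate why a slow measurement hides this behavior, here is a toy sketch, not the researchers' model. It assumes a qubit whose relaxation rate hops between just two values; the rates, the 1 ms time step, and the switching probability are all hypothetical numbers chosen for illustration.

```python
import numpy as np

def simulate_switching_rate(n_steps, rate_good, rate_bad, switch_prob, rng):
    """Toy random-telegraph model: at each time step the relaxation rate
    flips between a 'good' and a 'bad' value with probability switch_prob."""
    flips = rng.random(n_steps) < switch_prob
    state = np.cumsum(flips) % 2  # 0 = good, 1 = bad
    return np.where(state == 0, rate_good, rate_bad)

rng = np.random.default_rng(1)

# Hypothetical numbers: 1 ms steps over one minute, rates in 1/s,
# and about 100 switches per second.
rates = simulate_switching_rate(60_000, 1e4, 5e4, 0.1, rng)

# A one-minute measurement sees only a single averaged rate.
slow_average = rates.mean()

# A tracker that resolves 5 ms windows sees the rate jump between values.
fast_windows = rates.reshape(-1, 5).mean(axis=1)

print(f"one-minute average rate: {slow_average:.0f} 1/s")
print(f"5 ms windows range from {fast_windows.min():.0f} to {fast_windows.max():.0f} 1/s")
```

In this toy model the one-minute average lands between the two underlying rates and matches neither, while the short windows recover both the "good" and the "bad" value.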

It is somewhat like asking a strong workhorse to pull a plow while obstacles constantly appear in its path faster than anyone can react. The animal may be capable, but unpredictable disruptions make the job much harder.

FPGA Powered Real Time Qubit Control

A research team from the Niels Bohr Institute's Center for Quantum Devices and the Novo Nordisk Foundation Quantum Computing Programme, led by postdoctoral researcher Dr. Fabrizio Berritta, developed a real time adaptive measurement system that tracks changes in the qubit energy loss (relaxation) rate as they occur. The project involved collaboration with scientists from the Norwegian University of Science and Technology, Leiden University, and Chalmers University.

The new approach relies on a fast classical controller that updates its estimate of a qubit's relaxation rate within milliseconds. This matches the natural speed of the fluctuations themselves, rather than lagging seconds or minutes behind as older methods did.

To achieve this, the team used a Field Programmable Gate Array (FPGA), a type of classical processor designed for extremely rapid operations. By running the experiment directly on the FPGA, they could quickly generate a "best guess" of how fast the qubit was losing energy using only a few measurements. This eliminated the need for slower data transfers to a conventional computer.

Programming FPGAs for such specialized tasks can be challenging. Even so, the researchers succeeded in updating the controller's internal Bayesian model after every single qubit measurement. That allowed the system to continually refine its understanding of the qubit's condition in real time.
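The idea of refining an estimate after every measurement can be sketched in ordinary Python. This is a minimal illustration under stated assumptions, not the team's FPGA implementation: it assumes the qubit is prepared in its excited state, left for a wait time, and then measured, with the probability of still being excited given by exp(-rate × wait time). The grid of candidate rates, the rule for choosing the wait time, and the function names `bayes_update` and `estimate_rate` are all hypothetical.

```python
import numpy as np

def bayes_update(posterior, gammas, wait_time, outcome):
    """One Bayesian update of the belief over candidate relaxation rates.

    outcome == 1 means the qubit was still found excited after wait_time.
    """
    p_excited = np.exp(-gammas * wait_time)
    likelihood = p_excited if outcome == 1 else 1.0 - p_excited
    posterior = posterior * likelihood
    return posterior / posterior.sum()

def estimate_rate(true_gamma, n_shots, rng):
    """Simulate n_shots single measurements and return the posterior-mean rate."""
    gammas = np.linspace(1e3, 1e5, 400)  # candidate rates in 1/s (assumed range)
    posterior = np.full(gammas.size, 1.0 / gammas.size)  # flat prior
    for _ in range(n_shots):
        current_estimate = float(np.sum(gammas * posterior))
        # Assumed adaptive rule: wait roughly one estimated lifetime.
        wait_time = 1.0 / current_estimate
        # Simulated measurement of a qubit with the (hidden) true rate.
        outcome = int(rng.random() < np.exp(-true_gamma * wait_time))
        posterior = bayes_update(posterior, gammas, wait_time, outcome)
    return float(np.sum(gammas * posterior))

rng = np.random.default_rng(0)
estimate = estimate_rate(true_gamma=2e4, n_shots=500, rng=rng)
print(f"estimated relaxation rate: {estimate:.0f} 1/s")
```

Each update is only a multiplication and a normalization over a small grid. That is one plausible reason this kind of model is a good fit for hardware that must respond between consecutive measurements.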

As a result, the controller now keeps pace with the qubit's changing environment. Measurements and adjustments happen on nearly the same timescale as the fluctuations themselves, making the system roughly one hundred times faster than previously demonstrated.

The work also revealed something new. Scientists did not previously know just how quickly fluctuations occur in superconducting qubits. These experiments have now provided that insight.

Commercial Quantum Hardware Meets Advanced Control

FPGAs have long been used in other scientific and engineering fields. In this case, the researchers used a commercially available FPGA based controller from Quantum Machines called the OPX1000. The system can be programmed in a language similar to Python, which many physicists already use, making it more accessible to research groups worldwide.

The integration of this controller with advanced quantum hardware was made possible through close collaboration between the Niels Bohr Institute research group led by Associate Professor Morten Kjærgaard and Chalmers University, where the quantum processing unit was designed and fabricated. "The controller enables very tight integration between logic, measurements and feedforward: these components made our experiment possible," says Kjærgaard.

Why Real Time Calibration Matters for Quantum Computers

Quantum technologies promise powerful new capabilities, though practical large scale quantum computers are still under development. Progress often comes incrementally, but occasionally major steps forward occur.

By uncovering these previously hidden dynamics, the findings reshape how scientists think about testing and calibrating superconducting quantum processors. With current materials and manufacturing methods, moving toward real time monitoring and adjustment appears essential for improving reliability. The results also highlight the importance of partnerships between academic research and industry, along with creative uses of available technology.

"Nowadays, in quantum processing units in general, the overall performance is not determined by the best qubits, but by the worst ones: those are the ones we need to focus on. The surprise from our work is that a 'good' qubit can turn into a 'bad' one in fractions of a second, rather than minutes or hours.

"With our algorithm, the fast control hardware can pinpoint which qubit is 'good' or 'bad' basically in real time. We can also gather useful statistics on the 'bad' qubits in seconds instead of hours or days.

"We still cannot explain a large fraction of the fluctuations we observe. Understanding and controlling the physics behind such fluctuations in qubit properties will be necessary for scaling quantum processors to a useful size," Berritta says.
