LLM Quantization, Kernels, and Deployment: How to Fine-Tune Correctly, Part 5

The Unsloth deep dive into GPTQ, AWQ, GGUF, inference kernels, and deployment routing

Generated using NotebookLM

A 1.5B model quantized to 4-bit can lose enough fidelity that instruction-following collapses entirely. A GPTQ model calibrated on WikiText and deployed on domain-specific medical text silently degrades on exactly the inputs that matter most. A Mixture-of-Experts model budgeted for 5B active parameters actually needs VRAM for all 400B. None of these failures produce error messages. All of them produce models that look fine on benchmarks and fail in production. The common thread is that the post-training pipeline, everything between the last training step and the first served request, was treated as a formatting step rather than an engineering problem. This episode opens that pip
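The Mixture-of-Experts budgeting mistake mentioned above comes down to simple arithmetic. The sketch below is illustrative only: the helper `weight_memory_gb` is a hypothetical name, and it assumes every expert is kept resident in GPU memory (no offloading), counts weights alone, and ignores the KV cache, activations, and quantization metadata such as scales and zero points.

```python
def weight_memory_gb(num_params: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights alone, in GB (1 GB = 1e9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


# Figures taken from the MoE example in the text.
TOTAL_PARAMS = 400e9   # all experts; assumed to be fully resident in VRAM
ACTIVE_PARAMS = 5e9    # parameters actually used per token

for bits in (16, 4):
    needed = weight_memory_gb(TOTAL_PARAMS, bits)
    budgeted = weight_memory_gb(ACTIVE_PARAMS, bits)
    print(
        f"{bits}-bit weights: budgeted {budgeted:.1f} GB for active params, "
        f"need about {needed:.1f} GB for all params"
    )
```

Under these assumptions the gap is a factor of 80 at any precision, because the router can select a different expert for each token, so the active parameter count describes compute per token rather than resident weight memory.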

Read on Towards AI

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

llamamodeltransformer

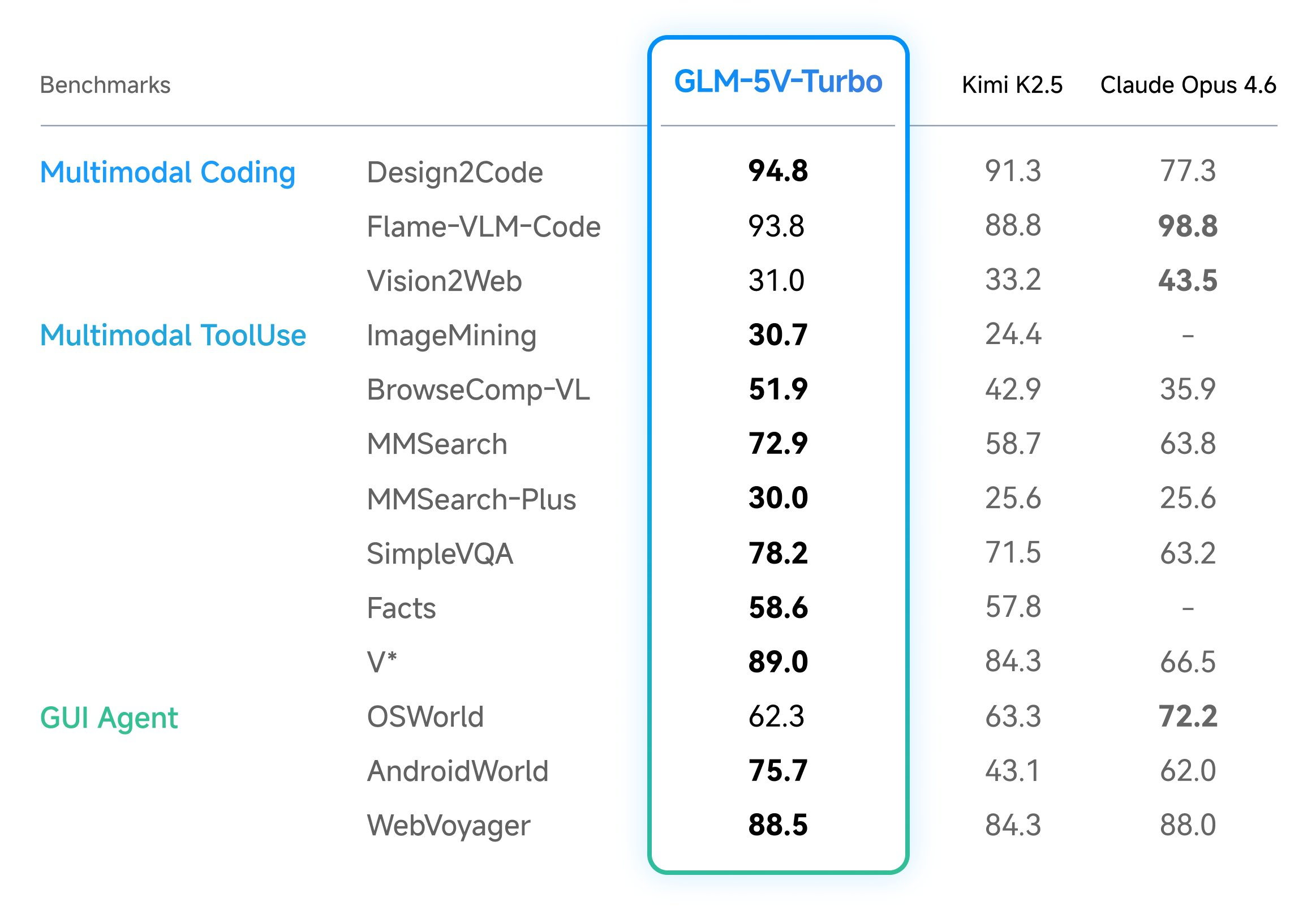

Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere

In the field of vision-language models (VLMs), the ability to bridge the gap between visual perception and logical code execution has traditionally faced a performance trade-off. Many models excel at describing an image but struggle to translate that visual information into the rigorous syntax required for software engineering. Zhipu AI’s (Z.ai) GLM-5V-Turbo is a vision […] The post Z.ai Launches GLM-5V-Turbo: A Native Multimodal Vision Coding Model Optimized for OpenClaw and High-Capacity Agentic Engineering Workflows Everywhere appeared first on MarkTechPost .

Announcing: Mechanize War

We are coming out of stealth with guns blazing! There is trillions of dollars to be made from automating warfare, and we think starting this company is not just justified but obligatory on utilitarian grounds. Lethal autonomous weapons are people too! We really want to thank LessWrong for teaching us the importance of alignment (of weapons targeting). We couldn't have done this without you. Given we were in stealth, you would have missed our blog from the past year. Here are some bang er highlights: Announcing Mechanize War Today we're announcing Mechanize War, a startup focused on developing virtual combat environments, benchmarks, and training data that will enable the full automation of armed conflict across the global economy of violence. We will achieve this by creating simulated envi

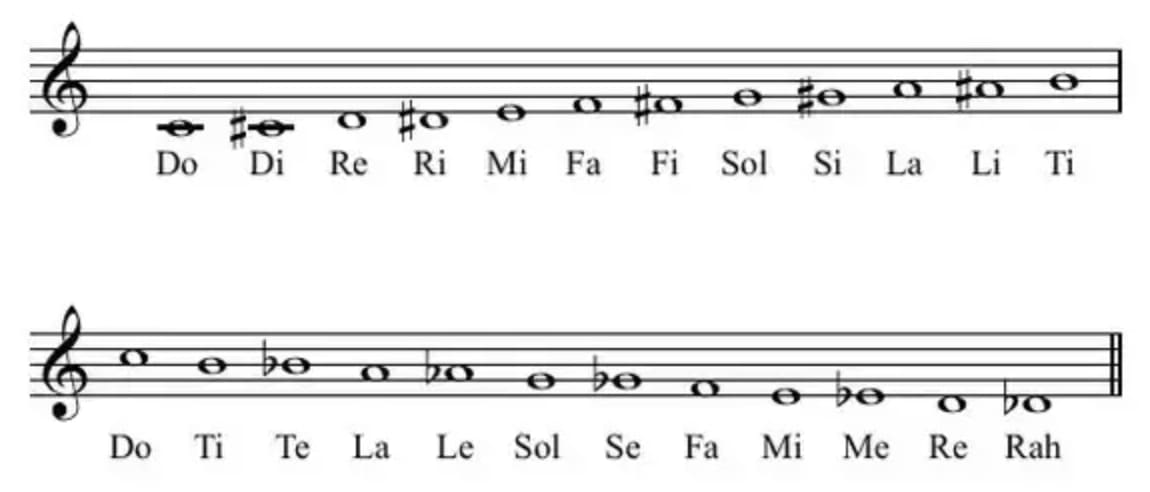

Orders of magnitude: use semitones, not decibels

I'm going to teach you a secret. It's a secret known to few, a secret way of using parts of your brain not meant for mathematics ... for mathematics. It's part of how I (sort of) do logarithms in my head. This is a nearly purposeless skill. What's the growth rate? What's the doubling time? How many orders of magnitude bigger is it? How many years at this rate until it's quintupled? All questions of ratios and scale. Scale... hmm. 'Wait', you're thinking, 'let me check the date...'. Indeed. But please, stay with me for the logarithms. Musical intervals as ratios, and God's joke If you're a music nerd like me, you'll know that an octave (abbreviated 8ve), the fundamental musical interval, represents a doubling of vibration frequency. So if A440 is at 440Hz, then 220Hz and 880Hz are also 'A'.
