🔥 MervinPraison/PraisonAI

PraisonAI 🦞 - Your 24/7 AI employee team. Automate and solve complex challenges with low-code multi-agent AI that plans, researches, codes, and delivers to Telegram, Discord, and WhatsApp. Handoffs, guardrails, memory, RAG, 100+ LLMs. — Trending on GitHub today with 107 new stars.

PraisonAI 🦞 — Automate and solve complex challenges with AI agent teams that plan, research, code, and deliver results to Telegram, Discord, and WhatsApp — running 24/7. A low-code, production-ready multi-agent framework with handoffs, guardrails, memory, RAG, and 100+ LLM providers, built around simplicity, customisation, and effective human-agent collaboration.

    pip install praisonai
    export TAVILY_API_KEY=xxxxx

Quick Paths:

🆕 New here? → Quick Start (1 minute to first agent)

📦 Installing? → Installation

🐍 Python SDK? → Python Examples

📄 YAML/No-Code? → YAML Examples

🎯 CLI user? → CLI Quick Reference

🤝 Contributing? → Contributing

⚡ Performance

PraisonAI is built for speed, with agent instantiation in under 4μs. This reduces overhead, improves responsiveness, and helps multi-agent systems scale efficiently in real-world production workloads.

| Performance Metric | PraisonAI |
| --- | --- |
| Avg Instantiation Time | 3.77 μs |
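The instantiation figure is a per-object construction time. As a minimal sketch of how such a number can be measured, the snippet below times a stand-in class with the standard library's `timeit`. `StubAgent` and `avg_instantiation_us` are hypothetical names used for illustration, not part of PraisonAI, so the printed value will not match the figure quoted here.

```python
import timeit


class StubAgent:
    """Hypothetical stand-in for an agent class; not PraisonAI's Agent."""

    def __init__(self, instructions: str):
        self.instructions = instructions


def avg_instantiation_us(factory, runs: int = 100_000) -> float:
    """Return the mean duration of one factory() call in microseconds."""
    total_seconds = timeit.timeit(factory, number=runs)
    return total_seconds / runs * 1e6


if __name__ == "__main__":
    avg = avg_instantiation_us(lambda: StubAgent("You are a helpful AI assistant"))
    print(f"Avg instantiation time: {avg:.2f} μs")
```

To time the real class, the same helper could be given `lambda: Agent(instructions="...")` after installing `praisonaiagents`.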

🎯 Use Cases

AI agents solving real-world problems across industries:

| Use Case | Description |
| --- | --- |
| 🔍 Research & Analysis | Conduct deep research, gather information, and generate insights from multiple sources automatically |
| 💻 Code Generation | Write, debug, and refactor code with AI agents that understand your codebase and requirements |
| ✍️ Content Creation | Generate blog posts, documentation, marketing copy, and technical writing with multi-agent teams |
| 📊 Data Pipelines | Extract, transform, and analyze data from APIs, databases, and web sources automatically |
| 🤖 Customer Support | Deploy 24/7 support bots on Telegram, Discord, Slack with memory and knowledge-backed responses |
| ⚙️ Workflow Automation | Automate multi-step business processes with agents that hand off tasks, verify results, and self-correct |

Supported Providers

PraisonAI supports 100+ LLM providers through seamless integration:

View all 24 providers with examples

Provider Example

OpenAI Example

Anthropic Example

Google Gemini Example

Ollama Example

Groq Example

DeepSeek Example

xAI Grok Example

Mistral Example

Cohere Example

Perplexity Example

Fireworks Example

Together AI Example

OpenRouter Example

HuggingFace Example

Azure OpenAI Example

AWS Bedrock Example

Google Vertex Example

Databricks Example

Cloudflare Example

AI21 Example

Replicate Example

SageMaker Example

Moonshot Example

vLLM Example

🌟 Why PraisonAI?

| Feature | How |
| --- | --- |
| 🔌 MCP Protocol: stdio, HTTP, WebSocket, SSE | `tools=MCP("npx ...")` |
| 🧠 Planning Mode: plan → execute → reason | `planning=True` |
| 🔍 Deep Research: multi-step autonomous research | Docs |
| 🤖 External Agents: orchestrate Claude Code, Gemini CLI, Codex | Docs |
| 🔄 Agent Handoffs: seamless conversation passing | `handoff=True` |
| 🛡️ Guardrails: input/output validation | Docs |
| Web Search + Fetch: native browsing | `web_search=True` |
| 🪞 Self Reflection: agent reviews its own output | Docs |
| 🔀 Workflow Patterns: route, parallel, loop, repeat | Docs |
| 🧠 Memory (zero deps): works out of the box | `memory=True` |

View all 25 features

| Feature | How |
| --- | --- |
| 💡 Prompt Caching: reduce latency + cost | `prompt_caching=True` |
| 💾 Sessions + Auto-Save: persistent state across restarts | `auto_save="my-project"` |
| 💭 Thinking Budgets: control reasoning depth | `thinking_budget=1024` |
| 📚 RAG + Quality-Based RAG: auto quality scoring retrieval | Docs |
| 📊 Model Router: auto-routes to cheapest capable model | Docs |
| 🧊 Shadow Git Checkpoints: auto-rollback on failure | Docs |
| 📡 A2A Protocol: agent-to-agent interop | Docs |
| 📏 Context Compaction: never hit token limits | Docs |
| 📡 Telemetry: OpenTelemetry traces, spans, metrics | Docs |
| 📜 Policy Engine: declarative agent behavior control | Docs |
| 🔄 Background Tasks: fire-and-forget agents | Docs |
| 🔁 Doom Loop Detection: auto-recovery from stuck agents | Docs |
| 🕸️ Graph Memory: Neo4j-style relationship tracking | Docs |
| 🏖️ Sandbox Execution: isolated code execution | Docs |
| 🖥️ Bot Gateway: multi-agent routing across channels | Docs |

🚀 Quick Start

Get started with PraisonAI in under 1 minute:

Install:

    pip install praisonaiagents

Set API key:

    export OPENAI_API_KEY=your_key_here

Create a simple agent:

    python -c "from praisonaiagents import Agent; Agent(instructions='You are a helpful AI assistant').start('Write a haiku about AI')"

Next Steps: Single Agent Example | Multi Agents | Full Docs

📦 Installation

Python SDK

Lightweight package dedicated to coding:

pip install praisonaiagents

For the full framework with CLI support:

pip install praisonai

🦞 PraisonAI Claw (full UI with bots, memory, knowledge, and gateway):

    pip install "praisonai[claw]"
    praisonai claw

🔗 PraisonAI Flow (Langflow Visual Flow Builder):

    pip install "praisonai[flow]"
    praisonai flow

🤖 PraisonAI UI (clean chat interface):

    pip install "praisonai[ui]"
    praisonai ui

JavaScript SDK

    npm install praisonai

📘 Using Python Code

1. Single Agent

    from praisonaiagents import Agent

    agent = Agent(instructions="You are a helpful AI assistant")
    agent.start("Write a movie script about a robot in Mars")

2. Multi Agents

    from praisonaiagents import Agent, Agents

    research_agent = Agent(instructions="Research about AI")
    summarise_agent = Agent(instructions="Summarise research agent's findings")
    agents = Agents(agents=[research_agent, summarise_agent])
    agents.start()

3. MCP (Model Context Protocol)

    from praisonaiagents import Agent, MCP

    # stdio - Local NPX/Python servers
    agent = Agent(tools=MCP("npx @modelcontextprotocol/server-memory"))

    # Streamable HTTP - Production servers
    agent = Agent(tools=MCP("https://api.example.com/mcp"))

    # WebSocket - Real-time bidirectional
    agent = Agent(tools=MCP("wss://api.example.com/mcp", auth_token="token"))

    # With environment variables
    agent = Agent(
        tools=MCP(
            command="npx",
            args=["-y", "@modelcontextprotocol/server-brave-search"],
            env={"BRAVE_API_KEY": "your-key"}
        )
    )

📖 Full MCP docs — stdio, HTTP, WebSocket, SSE transports
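The MCP examples pass a command string, an `https://` URL, or a `wss://` URL to the same constructor. The sketch below shows one way such a target string could be classified by its URL scheme. It is an illustration only: `guess_transport` is a hypothetical helper, and nothing here is claimed to be how PraisonAI selects a transport internally. SSE endpoints also use HTTP URLs, so the scheme alone cannot tell them apart from Streamable HTTP.

```python
from urllib.parse import urlparse


def guess_transport(target: str) -> str:
    """Classify an MCP target string by its URL scheme (illustrative only)."""
    scheme = urlparse(target).scheme.lower()
    if scheme in ("ws", "wss"):
        return "websocket"
    if scheme in ("http", "https"):
        return "http"
    # Anything without a network scheme is treated as a local command (stdio).
    return "stdio"


if __name__ == "__main__":
    for target in (
        "npx @modelcontextprotocol/server-memory",
        "https://api.example.com/mcp",
        "wss://api.example.com/mcp",
    ):
        print(f"{target} -> {guess_transport(target)}")
```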

4. Custom Tools

    from praisonaiagents import Agent, tool

    @tool
    def search(query: str) -> str:
        """Search the web for information."""
        return f"Results for: {query}"

    @tool
    def calculate(expression: str) -> float:
        """Evaluate a math expression."""
        # eval() executes arbitrary code; only use it with trusted input
        return eval(expression)

    agent = Agent(
        instructions="You are a helpful assistant",
        tools=[search, calculate]
    )
    agent.start("Search for AI news and calculate 154")
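The `calculate` tool in this example relies on `eval`, which will run any Python expression the model produces. A possible safer variant, sketched here with the standard library's `ast` module, accepts only numeric literals and basic arithmetic. `safe_calculate` is a hypothetical name, and whether it should be wrapped with `@tool` is left to the reader.

```python
import ast
import operator

# Operators the evaluator is willing to apply; everything else is rejected.
_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def safe_calculate(expression: str) -> float:
    """Evaluate +, -, *, / on numeric literals and reject anything else."""

    def _eval(node: ast.AST) -> float:
        if (
            isinstance(node, ast.Constant)
            and isinstance(node.value, (int, float))
            and not isinstance(node.value, bool)
        ):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](_eval(node.operand))
        raise ValueError("unsupported expression")

    # mode="eval" parses a single expression; malformed input raises SyntaxError.
    return float(_eval(ast.parse(expression, mode="eval").body))
```

Function calls, attribute access, and names all fall through to the `ValueError` branch, so an input such as `__import__('os')` is refused rather than executed.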

📖 Full tools docs — BaseTool, tool packages, 100+ built-in tools

5. Persistence (Databases)

    from praisonaiagents import Agent, db

    agent = Agent(
        name="Assistant",
        db=db(database_url="postgresql://localhost/mydb"),
        session_id="my-session"
    )
    agent.chat("Hello!")  # Auto-persists messages, runs, traces

📖 Full persistence docs — PostgreSQL, MySQL, SQLite, MongoDB, Redis, and 20+ more
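To make the idea of session-keyed persistence concrete, here is a minimal sketch that stores chat messages under a `session_id` using the standard library's `sqlite3`. `SessionStore` and its table layout are assumptions made for illustration; they are not PraisonAI's `db` adapter or its schema.

```python
import sqlite3


class SessionStore:
    """Hypothetical message store keyed by session_id; not PraisonAI's schema."""

    def __init__(self, path: str = ":memory:"):
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS messages ("
            "id INTEGER PRIMARY KEY AUTOINCREMENT, "
            "session_id TEXT NOT NULL, role TEXT NOT NULL, content TEXT NOT NULL)"
        )

    def append(self, session_id: str, role: str, content: str) -> None:
        with self.conn:  # commits on success, rolls back on error
            self.conn.execute(
                "INSERT INTO messages (session_id, role, content) VALUES (?, ?, ?)",
                (session_id, role, content),
            )

    def history(self, session_id: str) -> list[tuple[str, str]]:
        """Return (role, content) pairs for one session in insertion order."""
        rows = self.conn.execute(
            "SELECT role, content FROM messages WHERE session_id = ? ORDER BY id",
            (session_id,),
        )
        return rows.fetchall()
```

Passing a file path instead of `":memory:"` would let a later process read the same session back, which is the behaviour the `session_id` parameter suggests.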

6. PraisonAI Claw 🦞 (Dashboard UI)

Connect your AI agents to Telegram, Discord, Slack, WhatsApp and more — all from a single command.

    pip install "praisonai[claw]"
    praisonai claw

Open http://localhost:8082. The dashboard comes with 13 built-in pages: Chat, Agents, Memory, Knowledge, Channels, Guardrails, Cron, and more. Add messaging channels directly from the UI.

📖 Full Claw docs — platform tokens, CLI options, Docker, and YAML agent mode

7. Langflow Integration 🔗 (Visual Flow Builder)

Build multi-agent workflows visually with drag-and-drop components in Langflow.

    pip install "praisonai[flow]"
    praisonai flow

Open http://localhost:7861 and use the Agent and Agent Team components to create sequential or parallel workflows. Connect Chat Input → Agent Team → Chat Output for instant multi-agent pipelines.

📖 Full Flow docs — visual agent building, component reference, and deployment

8. PraisonAI UI 🤖 (Clean Chat)

Lightweight chat interface for your AI agents.

    pip install "praisonai[ui]"
    praisonai ui

📄 Using YAML (No Code)

Example 1: Two Agents Working Together

Create agents.yaml:

    framework: praisonai
    topic: "Write a blog post about AI"
    agents:
      researcher:
        role: Research Analyst
        goal: Research AI trends and gather information
        instructions: "Find accurate information about AI trends"
      writer:
        role: Content Writer
        goal: Write engaging blog posts
        instructions: "Write clear, engaging content based on research"

Run with:

praisonai agents.yaml

The agents automatically work together sequentially
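Sequential collaboration means each agent's output becomes the next agent's input. The sketch below shows that control flow with plain functions standing in for the researcher and writer. `run_sequentially`, `researcher`, and `writer` are hypothetical names that make no LLM calls and are not PraisonAI internals.

```python
from typing import Callable

Step = Callable[[str], str]


def run_sequentially(steps: list[Step], topic: str) -> str:
    """Feed the topic to the first step and each result to the next step."""
    result = topic
    for step in steps:
        result = step(result)
    return result


# Hypothetical stand-ins for the researcher and writer agents (no LLM calls).
def researcher(topic: str) -> str:
    return f"notes on {topic}"


def writer(notes: str) -> str:
    return f"blog post based on {notes}"


if __name__ == "__main__":
    print(run_sequentially([researcher, writer], "AI"))
```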

Example 2: Agent with Custom Tool

Create two files in the same folder:

agents.yaml:

    framework: praisonai
    topic: "Calculate the sum of 25 and 15"
    agents:
      calculator_agent:
        role: Calculator
        goal: Perform calculations
        instructions: "Use the add_numbers tool to help with calculations"
        tools:
          - add_numbers

tools.py:

    def add_numbers(a: float, b: float) -> float:
        """
        Add two numbers together.

        Args:
            a: First number
            b: Second number

        Returns:
            The sum of a and b
        """
        return a + b

Run with:

praisonai agents.yaml

💡 Tips:

- Use the function name (e.g., add_numbers) in the tools list, not the file name
- Tools in tools.py are automatically discovered
- The function's docstring helps the AI understand how to use it
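The tips above explain that a tool is identified by its function name and described by its docstring. As a rough sketch of what a framework could read from a plain function, the snippet below uses the standard library's `inspect` module. `describe_tool` is a hypothetical helper, and the dictionary layout is an assumption rather than the format PraisonAI builds.

```python
import inspect


def describe_tool(func) -> dict:
    """Collect the name, docstring and parameter types of a plain function."""
    signature = inspect.signature(func)
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": {
            # Use the type's name when available, else its string form.
            name: getattr(param.annotation, "__name__", str(param.annotation))
            for name, param in signature.parameters.items()
        },
    }


def add_numbers(a: float, b: float) -> float:
    """Add two numbers together."""
    return a + b


if __name__ == "__main__":
    print(describe_tool(add_numbers))
```

A function with no docstring yields an empty description, which is one reason the docstring matters when a model has to decide which tool to call.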

🎯 CLI Quick Reference

| Category | Commands |
| --- | --- |
| Execution | `praisonai`, `--auto`, `--interactive`, `--chat` |
| Research | `research`, `--query-rewrite`, `--deep-research` |
| Planning | `--planning`, `--planning-tools`, `--planning-reasoning` |
| Workflows | `workflow run`, `workflow list`, `workflow auto` |
| Memory | `memory show`, `memory add`, `memory search`, `memory clear` |
| Knowledge | `knowledge add`, `knowledge query`, `knowledge list` |
| Sessions | `session list`, `session resume`, `session delete` |
| Tools | `tools list`, `tools info`, `tools search` |
| MCP | `mcp list`, `mcp create`, `mcp enable` |
| Development | `commit`, `docs`, `checkpoint`, `hooks` |
| Scheduling | `schedule start`, `schedule list`, `schedule stop` |

📖 Full CLI reference

✨ Key Features

🤖 Core Agents

Feature Code Docs

Single Agent Example 📖

Multi Agents Example 📖

Auto Agents Example 📖

Self Reflection AI Agents Example 📖

Reasoning AI Agents Example 📖

Multi Modal AI Agents Example 📖

🔄 Workflows

| Feature | Code | Docs |
| --- | --- | --- |
| Simple Workflow | Example | 📖 |
| Workflow with Agents | Example | 📖 |
| Agentic Routing (`route()`) | Example | 📖 |
| Parallel Execution (`parallel()`) | Example | 📖 |
| Loop over List/CSV (`loop()`) | Example | 📖 |
| Evaluator-Optimizer (`repeat()`) | Example | 📖 |
| Conditional Steps | Example | 📖 |
| Workflow Branching | Example | 📖 |
| Workflow Early Stop | Example | 📖 |
| Workflow Checkpoints | Example | 📖 |

💻 Code & Development

Feature Code Docs

Code Interpreter Agents Example 📖

AI Code Editing Tools Example 📖

External Agents (All) Example 📖

Claude Code CLI Example 📖

Gemini CLI Example 📖

Codex CLI Example 📖

Cursor CLI Example 📖

🧠 Memory & Knowledge

Feature Code Docs

Memory (Short & Long Term) Example 📖

File-Based Memory Example 📖

Claude Memory Tool Example 📖

Add Custom Knowledge Example 📖

RAG Agents Example 📖

Chat with PDF Agents Example 📖

Data Readers (PDF, DOCX, etc.) CLI 📖

Vector Store Selection CLI 📖

Retrieval Strategies CLI 📖

Rerankers CLI 📖

Index Types (Vector/Keyword/Hybrid) CLI 📖

Query Engines (Sub-Question, etc.) CLI 📖

🔬 Research & Intelligence

Feature Code Docs

Deep Research Agents Example 📖

Query Rewriter Agent Example 📖

Native Web Search Example 📖

Built-in Search Tools Example 📖

Unified Web Search Example 📖

Web Fetch (Anthropic) Example 📖

📋 Planning & Execution

Feature Code Docs

Planning Mode Example 📖

Planning Tools Example 📖

Planning Reasoning Example 📖

Prompt Chaining Example 📖

Evaluator Optimiser Example 📖

Orchestrator Workers Example 📖

👥 Specialized Agents

Feature Code Docs

Data Analyst Agent Example 📖

Finance Agent Example 📖

Shopping Agent Example 📖

Recommendation Agent Example 📖

Wikipedia Agent Example 📖

Programming Agent Example 📖

Math Agents Example 📖

Markdown Agent Example 📖

Prompt Expander Agent Example 📖

🎨 Media & Multimodal

Feature Code Docs

Image Generation Agent Example 📖

Image to Text Agent Example 📖

Video Agent Example 📖

Camera Integration Example 📖

🔌 Protocols & Integration

Feature Code Docs

MCP Transports Example 📖

WebSocket MCP Example 📖

MCP Security Example 📖

MCP Resumability Example 📖

MCP Config Management Docs 📖

LangChain Integrated Agents Example 📖

🛡️ Safety & Control

Feature Code Docs

Guardrails Example 📖

Human Approval Example 📖

Rules & Instructions Docs 📖

⚙️ Advanced Features

Feature Code Docs

Async & Parallel Processing Example 📖

Parallelisation Example 📖

Repetitive Agents Example 📖

Agent Handoffs Example 📖

Stateful Agents Example 📖

Autonomous Workflow Example 📖

Structured Output Agents Example 📖

Model Router Example 📖

Prompt Caching Example 📖

Fast Context Example 📖

🛠️ Tools & Configuration

Feature Code Docs

100+ Custom Tools Example 📖

YAML Configuration Example 📖

100+ LLM Support Example 📖

Callback Agents Example 📖

Hooks Example 📖

Middleware System Example 📖

Configurable Model Example 📖

Rate Limiter Example 📖

Injected Tool State Example 📖

Shadow Git Checkpoints Example 📖

Background Tasks Example 📖

Policy Engine Example 📖

Thinking Budgets Example 📖

Output Styles Example 📖

Context Compaction Example 📖

📊 Monitoring & Management

Feature Code Docs

Sessions Management Example 📖

Auto-Save Sessions Docs 📖

History in Context Docs 📖

Telemetry Example 📖

Project Docs (.praison/docs/) Docs 📖

AI Commit Messages Docs 📖

@Mentions in Prompts Docs 📖

🖥️ CLI Features

Feature Code Docs

Slash Commands Example 📖

Autonomy Modes Example 📖

Cost Tracking Example 📖

Repository Map Example 📖

Interactive TUI Example 📖

Git Integration Example 📖

Sandbox Execution Example 📖

CLI Compare Example 📖

Profile/Benchmark Docs 📖

Auto Mode Docs 📖

Init Docs 📖

File Input Docs 📖

Final Agent Docs 📖

Max Tokens Docs 📖

🧪 Evaluation

Feature Code Docs

Accuracy Evaluation Example 📖

Performance Evaluation Example 📖

Reliability Evaluation Example 📖

Criteria Evaluation Example 📖

🎯 Agent Skills

Feature Code Docs

Skills Management Example 📖

Custom Skills Example 📖

⏰ 24/7 Scheduling

Feature Code Docs

Agent Scheduler Example 📖

💻 Using JavaScript Code

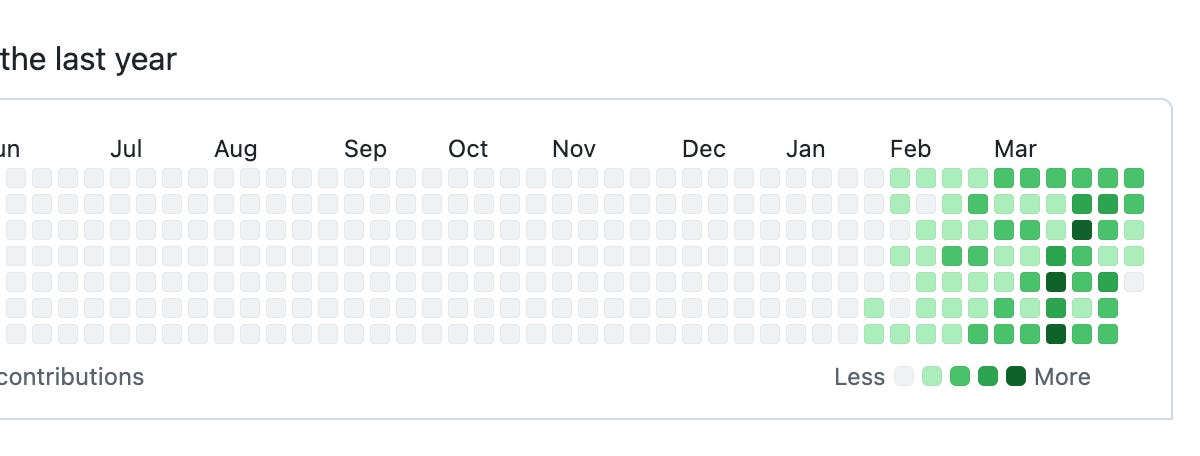

Install the package and set the API key:

    npm install praisonai
    export OPENAI_API_KEY=xxxxxxxxxxxxxxxxxxxxxx

Create an agent:

    const { Agent } = require('praisonai');
    const agent = new Agent({ instructions: 'You are a helpful AI assistant' });
    agent.start('Write a movie script about a robot in Mars');

⭐ Star History

🎓 Video Tutorials

Learn PraisonAI through our comprehensive video series:

View all 22 video tutorials

Topic Video

AI Agents with Self Reflection

Reasoning Data Generating Agent

AI Agents with Reasoning

Multimodal AI Agents

AI Agents Workflow

Async AI Agents

Mini AI Agents

AI Agents with Memory

Repetitive Agents

Introduction

Tools Overview

Custom Tools

Firecrawl Integration

User Interface

Crawl4AI Integration

Chat Interface

Code Interface

Mem0 Integration

Training

Realtime Voice Interface

Call Interface

Reasoning Extract Agents

👥 Contributing

We welcome contributions! Fork the repo, create a branch, and submit a PR → Contributing Guide.

❓ FAQ & Troubleshooting

ModuleNotFoundError: No module named 'praisonaiagents'

Install the package:

pip install praisonaiagents

API key not found / Authentication error

Ensure your API key is set:

export OPENAI_API_KEY=your_key_here

For other providers, see Models docs.
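A missing key is easier to diagnose when it is checked before any agent is created. The sketch below is one way to do that with the standard library. `require_env` is a hypothetical helper, not part of `praisonaiagents`.

```python
import os


def require_env(name: str) -> str:
    """Return the value of an environment variable or fail with a clear message."""
    value = os.environ.get(name, "").strip()
    if not value:
        raise RuntimeError(f"{name} is not set; run: export {name}=your_key_here")
    return value


if __name__ == "__main__":
    try:
        require_env("OPENAI_API_KEY")
        print("OPENAI_API_KEY is set")
    except RuntimeError as error:
        print(error)
```

Calling `require_env("OPENAI_API_KEY")` at the top of a script turns a late authentication error into an immediate, readable one.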

How do I use a local model (Ollama)?

    # Start Ollama server first
    ollama serve

    # Set environment variable
    export OPENAI_BASE_URL=http://localhost:11434/v1

See Models docs for more details.

How do I persist conversations to a database?

Use the db parameter:

    from praisonaiagents import Agent, db

    agent = Agent(
        name="Assistant",
        db=db(database_url="postgresql://localhost/mydb"),
        session_id="my-session"
    )

See Persistence docs for supported databases.

How do I enable agent memory?

    from praisonaiagents import Agent

    agent = Agent(
        name="Assistant",
        memory=True,  # Enables file-based memory (no extra deps!)
        user_id="user123"
    )

See Memory docs for more options.

How do I run multiple agents together?

    from praisonaiagents import Agent, Agents

    agent1 = Agent(instructions="Research topics")
    agent2 = Agent(instructions="Summarize findings")
    agents = Agents(agents=[agent1, agent2])
    agents.start()

See Agents docs for more examples.

How do I use MCP tools?

    from praisonaiagents import Agent, MCP

    agent = Agent(
        tools=MCP("npx @modelcontextprotocol/server-memory")
    )

See MCP docs for all transport options.

Getting Help

- 📚 Full Documentation
- 🐛 Report Issues
- 💬 Discussions
