Claude Code's Leaked Source: A Real-World Masterclass in Harness Engineering

Earlier this year, Mitchell Hashimoto coined the term "harness engineering": the discipline of building everything around the model that makes an AI agent actually work in production. OpenAI wrote about it. Anthropic published guides. Martin Fowler analyzed it.

Then Claude Code's source leaked: 512K lines of TypeScript. Suddenly we have the first real look at what production harness engineering looks like at scale.

A close look at Claude Code's leaked source, particularly its memory system, caching architecture, and security layers, shows that the harness is far more interesting than the LLM calls themselves.

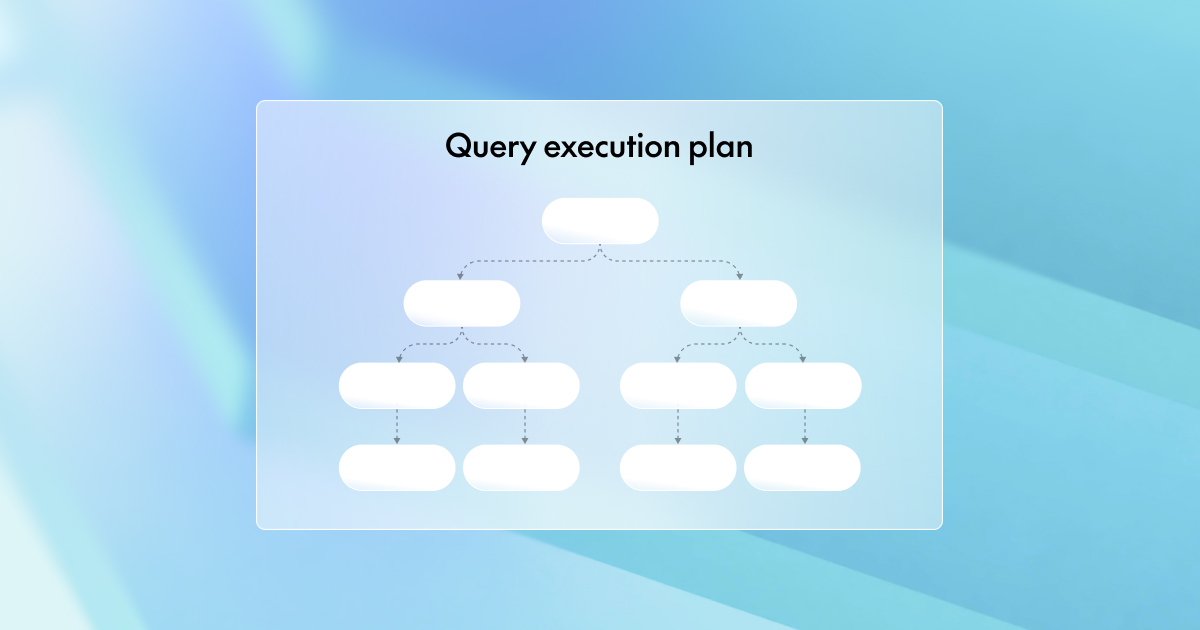

The Evolution: From Prompt to Harness

The AI engineering discipline has shifted rapidly:

    2023-2024: Prompt Engineering  → "How to ask the model"
    2025:      Context Engineering → "What information to feed the model"
    2026:      Harness Engineering → "How the entire system runs around the model"

Prompt engineering is the question. Context engineering is the blueprint. Harness engineering is the construction site: the tools, permissions, safety checks, cost controls, feedback loops, and state management that let the agent operate reliably.

The leaked Claude Code source is a concrete case study for each of these harness layers.

Prompt Cache Economics: A Cost Center, Not an Optimization

One of the most revealing modules is promptCacheBreakDetection.ts. It tracks 14 distinct cache invalidation vectors and uses "sticky latches": mechanisms that prevent mode switches from breaking cached prompt prefixes.

    ┌─────────────────────────────────────────────────┐
    │               Prompt Cache Layer                │
    │                                                 │
    │  ┌─────────┐  ┌─────────┐  ┌─────────┐          │
    │  │ Vector 1│  │ Vector 2│  │Vector 14│  ...     │
    │  │  Mode   │  │  Tool   │  │ Context │          │
    │  │ Switch  │  │ Change  │  │ Rotate  │          │
    │  └────┬────┘  └────┬────┘  └────┬────┘          │
    │       │            │            │               │
    │       ▼            ▼            ▼               │
    │  ┌──────────────────────────────────────┐       │
    │  │          Sticky Latch Layer          │       │
    │  │  "Hold current prefix until forced"  │       │
    │  └─────────────────┬────────────────────┘       │
    │                    │                            │
    │                    ▼                            │
    │           ┌─────────────────┐                   │
    │           │ Cache Decision  │                   │
    │           │  KEEP / BREAK   │                   │
    │           └─────────────────┘                   │
    └─────────────────────────────────────────────────┘

This reframes prompt caching from a performance trick into a cost-management problem. At scale, each cache miss is real money. The code treats cache management with the same rigor as database query planning: monitoring invalidation patterns, measuring hit rates, and making explicit keep-or-break decisions.
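
To make the keep-or-break idea concrete, here is a minimal sketch of a sticky latch in front of a prefix cache. Everything in it (`StickyLatch`, `PrefixTracker`, the shape of `PrefixInputs`) is a hypothetical illustration, not code from the leaked module, and it models only two of the invalidation vectors: mode switches and tool changes.

```typescript
// Hypothetical sketch: every name below is illustrative, not from the leaked source.
type CacheDecision = "KEEP" | "BREAK";

interface PrefixInputs {
  mode: string;        // e.g. "plan" or "edit"
  toolNames: string[]; // tools advertised in the cached prefix
}

// A sticky latch holds the first value it sees until a change is forced.
class StickyLatch<T> {
  private slot: { value: T } | null = null;

  resolve(next: T, force: boolean = false): T {
    if (this.slot === null || force) {
      this.slot = { value: next };
    }
    return this.slot.value;
  }
}

class PrefixTracker {
  private lastKey: string | null = null;
  private modeLatch = new StickyLatch<string>();
  hits = 0;
  misses = 0;

  // Returns KEEP when the prefix is unchanged, BREAK when it must be rebuilt.
  decide(requested: PrefixInputs, forceModeSwitch: boolean = false): CacheDecision {
    const mode = this.modeLatch.resolve(requested.mode, forceModeSwitch);
    const tools = [...requested.toolNames].sort();
    const key = JSON.stringify({ mode, tools });
    const decision: CacheDecision = key === this.lastKey ? "KEEP" : "BREAK";
    if (decision === "KEEP") {
      this.hits++;
    } else {
      this.misses++;
    }
    this.lastKey = key;
    return decision;
  }

  // Fraction of requests that reused the cached prefix.
  hitRate(): number {
    const total = this.hits + this.misses;
    return total === 0 ? 0 : this.hits / total;
  }
}
```

In this sketch, a request that toggles the mode from "plan" to "edit" still yields KEEP, because the latch keeps reporting "plan" to the prefix builder. Only a tool change or an explicitly forced switch yields BREAK, and `hitRate()` gives the number you would watch on a dashboard.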

The takeaway for agent builders: if your agent makes repeated API calls (and it does), prompt caching is not optional. It's a cost center that needs active management.

Multi-Agent Coordination: The Prompt IS the Harness

Claude Code's sub-agent system is internally called "swarms." The surprising part: coordination between agents is not handled by a state machine, a DAG executor, or an orchestration framework. It's done through natural language prompts.

    ┌───────────────────────────────────────────┐
    │         Main Agent (Orchestrator)         │
    │                                           │
    │  System prompt includes:                  │
    │  - "Do not rubber-stamp weak work"        │
    │  - Tool permission boundaries             │
    │  - Task decomposition strategy            │
    │                                           │
    │       ┌───────────┼───────────┐           │
    │       ▼           ▼           ▼           │
    │  ┌─────────┐ ┌─────────┐ ┌─────────┐      │
    │  │  Sub-   │ │  Sub-   │ │  Sub-   │      │
    │  │ Agent A │ │ Agent B │ │ Agent C │      │
    │  │         │ │         │ │         │      │
    │  │ Isolated│ │ Isolated│ │ Isolated│      │
    │  │ Context │ │ Context │ │ Context │      │
    │  │ Scoped  │ │ Scoped  │ │ Scoped  │      │
    │  │ Tools   │ │ Tools   │ │ Tools   │      │
    │  └─────────┘ └─────────┘ └─────────┘      │
    └───────────────────────────────────────────┘

Each sub-agent runs in an isolated context with specific tool permissions. The orchestrator coordinates them through instructions embedded in prompts: quality standards, scope boundaries, conflict resolution rules. All in natural language.
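
A rough sketch of what prompt-driven coordination can look like follows. The `SubAgentSpec` and `AgentContext` types and all three functions are hypothetical names chosen for illustration; the leaked source is not quoted here. The coordination rules are plain strings, each sub-agent starts with an empty message list, and tool scope is checked in code.

```typescript
// Hypothetical sketch: these types and functions are illustrative only.
interface SubAgentSpec {
  name: string;
  task: string;
  allowedTools: string[]; // tool scope for this sub-agent
  rules: string[];        // coordination rules in plain language
}

interface AgentContext {
  systemPrompt: string;
  messages: string[]; // starts empty: nothing leaks in from the orchestrator
  tools: string[];
}

// Coordination logic is expressed as prompt text rather than a state machine.
function buildSubAgentPrompt(spec: SubAgentSpec): string {
  const lines = [
    `You are sub-agent "${spec.name}".`,
    `Task: ${spec.task}`,
    `You may only use these tools: ${spec.allowedTools.join(", ")}.`,
    "Rules:",
    ...spec.rules.map((rule) => `- ${rule}`),
    "Report results and unresolved conflicts back to the orchestrator.",
  ];
  return lines.join("\n");
}

function createIsolatedContext(spec: SubAgentSpec): AgentContext {
  return {
    systemPrompt: buildSubAgentPrompt(spec),
    messages: [],
    tools: [...spec.allowedTools],
  };
}

// In this sketch the tool scope is also checked in code, not only stated in the prompt.
function canUseTool(context: AgentContext, tool: string): boolean {
  return context.tools.indexOf(tool) !== -1;
}

const reviewer = createIsolatedContext({
  name: "reviewer",
  task: "Review the diff produced by the implementer.",
  allowedTools: ["read_file", "grep"],
  rules: ["Do not rubber-stamp weak work.", "Stay within the files named in the task."],
});
console.log(reviewer.systemPrompt);
```

Adding a new coordination rule here means appending a sentence to `rules`, not adding a node to a graph, which is the trade-off the section describes.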

This is a strong signal: for LLM-based multi-agent systems, traditional orchestration frameworks may add unnecessary complexity. The model already understands natural language instructions. Why build a state machine when a well-written prompt can express the same coordination logic?

Memory and State: Progressive Disclosure at Every Layer

One of the more practical patterns in the codebase is the file-based memory system with progressive disclosure.

The design uses a two-tier structure:

    ┌──────────────────────────────────────────────┐
    │             Memory Architecture              │
    │                                              │
    │  Tier 1: Index (always loaded)               │
    │  ┌──────────────────────────────────────┐    │
    │  │ MEMORY.md                            │    │
    │  │                                      │    │
    │  │ - [User role](user_role.md) - ...    │    │
    │  │ - [Testing](feedback_test.md) - ...  │    │
    │  │ - [Auth rewrite](project_auth.md)    │    │
    │  │                                      │    │
    │  │ Cost: ~200 tokens (one-line hooks)   │    │
    │  └──────────────────────────────────────┘    │
    │                                              │
    │  Tier 2: Full content (loaded on demand)     │
    │  ┌────────────┐ ┌────────────┐ ┌──────────┐  │
    │  │user_role.md│ │feedback_   │ │project_  │  │
    │  │            │ │test.md     │ │auth.md   │  │
    │  │ Full user  │ │ Full test  │ │ Full     │  │
    │  │ context    │ │ guidelines │ │ context  │  │
    │  │ (~500 tok) │ │ (~300 tok) │ │(~400 tok)│  │
    │  └────────────┘ └────────────┘ └──────────┘  │
    │                                              │
    │  Only fetched when the index line matches    │
    │  the current task context                    │
    └──────────────────────────────────────────────┘

The index (MEMORY.md) is always loaded into the context window, which is cheap at ~200 tokens. Full memory files are only fetched when a one-line hook in the index matches the current task. This keeps the context window lean while still giving the agent access to rich historical context when needed.

This is essentially the same pattern as database indexing: maintain a small, fast lookup structure that points to larger data. Applied to LLM context windows, it's a practical solution to the "agents forget everything between sessions" problem without paying the token cost of loading everything upfront.
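
The two-tier lookup can be sketched in a few lines. The index line format, the function names, and especially the keyword-overlap matching rule are assumptions made for illustration. The article does not say how a hook is matched against the task, and in a real agent that decision may well be left to the model. File loading is passed in as a callback (`loadFile`, also hypothetical) so the sketch stays self-contained.

```typescript
// Hypothetical sketch: the index line format and matching rule are assumptions.
interface IndexEntry {
  title: string;
  file: string;
  hook: string; // one-line summary kept in the always-loaded index
}

// Parses lines such as "- [Auth rewrite](project_auth.md) - migrating login to OAuth".
function parseIndex(indexText: string): IndexEntry[] {
  const entries: IndexEntry[] = [];
  for (const line of indexText.split("\n")) {
    const match = /^-\s*\[([^\]]+)\]\(([^)]+)\)\s*[-:]?\s*(.*)$/.exec(line.trim());
    if (match) {
      entries.push({ title: match[1], file: match[2], hook: match[3] });
    }
  }
  return entries;
}

// Naive stand-in for relevance: any task word of four or more letters appears in the entry.
function selectRelevant(entries: IndexEntry[], task: string): IndexEntry[] {
  const words = task.toLowerCase().split(/\W+/).filter((w) => w.length > 3);
  return entries.filter((entry) => {
    const haystack = `${entry.title} ${entry.hook}`.toLowerCase();
    return words.some((w) => haystack.indexOf(w) !== -1);
  });
}

// Tier 1 (the index) is always included; Tier 2 files load only on a match.
function buildMemoryContext(
  indexText: string,
  task: string,
  loadFile: (file: string) => string
): string {
  const selected = selectRelevant(parseIndex(indexText), task);
  return [indexText, ...selected.map((entry) => loadFile(entry.file))].join("\n\n");
}
```

The token cost of the result is the index plus only the files whose hooks matched, which is the whole point of the index-then-fetch design.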

The memory system also categorizes memories by type (user preferences, feedback corrections, project context, external references), each with different update and retrieval patterns. This is more sophisticated than a flat memory store and mirrors how humans organize knowledge.

Security: 23 Checks and Adversarial Hardening

Every bash command execution passes through 23 security checks. This is not a theoretical threat model; it's the result of real-world adversarial usage.

The defenses include:

- Zero-width character injection: invisible Unicode characters that can alter command semantics

- Zsh expansion tricks: shell-specific syntax that can escape sandboxes

- Native client authentication: a DRM-style mechanism where the Zig HTTP layer computes a hash (cch=56670 placeholder replaced at transport time) to verify client legitimacy

          User Input
               │
               ▼
    ┌──────────────────────┐
    │  23 Security Checks  │
    │                      │
    │  • Zero-width chars  │
    │  • Zsh expansion     │
    │  • Path traversal    │
    │  • Injection patterns│
    │  • ...19 more        │
    │                      │
    └──────────┬───────────┘
               │
             PASS?
               │
         ┌─────┴─────┐
         │           │
        YES          NO
         │           │
         ▼           ▼
      Execute     Block +
      Command     Log Event

The lesson: agent security is not just sandboxing. It's adversarial input hardening at every boundary. If an agent can execute shell commands, assume someone will try to make it execute the wrong ones, intentionally or not.
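
As a minimal sketch of the pass-or-block flow, the snippet below runs a command through a list of checks and blocks on any failure. The three checks and their regular expressions are simplified illustrations chosen for this example, not the 23 checks from the leaked source, and the names `SecurityCheck`, `Verdict`, and `vetCommand` are hypothetical.

```typescript
// Hypothetical sketch: three illustrative checks, not the 23 from the leaked source.
interface SecurityCheck {
  name: string;
  violates: (command: string) => boolean;
}

interface Verdict {
  allowed: boolean;
  failed: string[]; // names of the checks that flagged the command
}

const CHECKS: SecurityCheck[] = [
  // Invisible characters such as the zero-width space, joiners, and the BOM.
  { name: "zero-width-chars", violates: (c) => /[\u200B-\u200D\u2060\uFEFF]/.test(c) },
  // A ".." path segment that could climb out of the working directory.
  { name: "path-traversal", violates: (c) => /(^|[\s\/=])\.\.(\/|\s|$)/.test(c) },
  // Command substitution via $(...) or backticks.
  { name: "command-substitution", violates: (c) => /\$\(|`/.test(c) },
];

// Runs every check so the log can record all reasons, then blocks on any failure.
function vetCommand(command: string): Verdict {
  const failed = CHECKS
    .filter((check) => check.violates(command))
    .map((check) => check.name);
  return { allowed: failed.length === 0, failed };
}

console.log(vetCommand("ls -la"));
console.log(vetCommand("cat ../../etc/passwd"));
```

Collecting every failed check name, instead of stopping at the first one, is a design choice in this sketch: it makes the "Block + Log Event" branch more useful when you later review what was attempted.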

When NOT to Call the Model

Claude Code detects user frustration using regex, not LLM inference.

Patterns like "wtf", "so frustrating", "this is broken" are matched via simple pattern rules and trigger tone adjustments in subsequent responses. No API call needed.
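
A sketch of this approach fits in a dozen lines. The three phrases are the ones quoted above; the function names and the wording of the tone hint are made up for illustration.

```typescript
// Hypothetical sketch: the three phrases come from the article; the rest is illustrative.
const FRUSTRATION_PATTERNS: RegExp[] = [
  /\bwtf\b/i,
  /\bso frustrating\b/i,
  /\bthis is broken\b/i,
];

function isFrustrated(message: string): boolean {
  return FRUSTRATION_PATTERNS.some((pattern) => pattern.test(message));
}

// Returns an extra instruction for the next model call, or null when none is needed.
function toneAdjustment(message: string): string | null {
  return isFrustrated(message)
    ? "The user sounds frustrated. Be concise, acknowledge the problem, and skip the pleasantries."
    : null;
}

console.log(toneAdjustment("wtf, this is broken again"));
console.log(toneAdjustment("Looks good, thanks"));
```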

This sounds almost trivially simple. But it embodies a core harness engineering principle: use the cheapest, fastest tool that solves the problem.

The codebase applies this principle consistently:

| Task | Solution | Why not LLM? |
| --- | --- | --- |
| Frustration detection | Regex | Fast, free, reliable enough |
| Terminal rendering | React + Ink with Int32Array buffers | Rendering is a solved problem |
| Cache invalidation tracking | Dedicated TS module | Deterministic logic, no ambiguity |
| Client auth | Zig HTTP layer hash | Security must be deterministic |

LLM calls are reserved for tasks that genuinely require language understanding. Everything else uses conventional engineering. A $0 regex beats a $0.01 model call when accuracy is comparable.

The Rendering Layer: Game Engine Meets Terminal

An unexpected finding: the CLI terminal interface is built with React + Ink and uses game-engine-style rendering optimizations.

The implementation uses Int32Array buffers and patch-based updates, similar to how game engines minimize draw calls by only updating changed pixels. The team claims this achieves ~50x fewer stringWidth calls during token streaming.

This makes sense when you think about it. A terminal UI streaming LLM output has similar challenges to a game render loop: frequent partial updates, variable-length content, frame-rate sensitivity. The harness applies domain-appropriate engineering rather than treating the terminal as an afterthought.
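
The patch-based idea can be illustrated with a toy screen buffer. This is a sketch under simplifying assumptions (one `Int32Array` cell per UTF-16 code unit, 0 meaning an empty cell). It is not the Ink renderer or the leaked implementation, and the names `Patch`, `toBuffer`, `diffBuffers`, and `applyPatches` are hypothetical.

```typescript
// Hypothetical sketch: a flat cell buffer and a diff, not the actual renderer.
interface Patch {
  index: number;    // cell position in the flat screen buffer
  codeUnit: number; // new character code for that cell
}

// Encodes text into a fixed-size buffer; 0 marks an empty cell.
function toBuffer(text: string, size: number): Int32Array {
  const buffer = new Int32Array(size);
  for (let i = 0; i < Math.min(text.length, size); i++) {
    buffer[i] = text.charCodeAt(i);
  }
  return buffer;
}

// Compares two frames cell by cell and records only the cells that changed.
function diffBuffers(prev: Int32Array, next: Int32Array): Patch[] {
  const patches: Patch[] = [];
  const length = Math.min(prev.length, next.length);
  for (let i = 0; i < length; i++) {
    if (prev[i] !== next[i]) {
      patches.push({ index: i, codeUnit: next[i] });
    }
  }
  return patches;
}

// Applies patches in place, touching only the changed cells.
function applyPatches(screen: Int32Array, patches: Patch[]): void {
  for (const patch of patches) {
    screen[patch.index] = patch.codeUnit;
  }
}

const frameBefore = toBuffer("Hello wor", 16);
const frameAfter = toBuffer("Hello world", 16);
const framePatches = diffBuffers(frameBefore, frameAfter);
console.log(framePatches.length); // 2: only the cells for "l" and "d" changed
```

When two streamed tokens extend a line, only the new cells are written, and the unchanged part of the frame never needs to be measured or redrawn.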

The Big Picture: Model as Commodity, Harness as Moat

The leaked source paints a clear picture of where the real engineering effort lives in a production AI agent:

    ┌─────────────────────────────────────────────────┐
    │                  Agent Harness                  │
    │                                                 │
    │  ┌──────────┐ ┌──────────┐ ┌──────────────────┐ │
    │  │  Cache   │ │ Security │ │Tool Orchestration│ │
    │  │ Economics│ │ Hardening│ │  & Permissions   │ │
    │  └──────────┘ └──────────┘ └──────────────────┘ │
    │  ┌──────────┐ ┌──────────┐ ┌──────────────────┐ │
    │  │ Memory & │ │  State   │ │   Multi-Agent    │ │
    │  │ Retrieval│ │ Persist  │ │  Coordination    │ │
    │  └──────────┘ └──────────┘ └──────────────────┘ │
    │  ┌──────────┐ ┌──────────┐ ┌──────────────────┐ │
    │  │   Cost   │ │  UI/UX   │ │  Observability   │ │
    │  │ Control  │ │ Rendering│ │   & Logging      │ │
    │  └──────────┘ └──────────┘ └──────────────────┘ │
    │                                                 │
    │              ┌──────────────┐                   │
    │              │   LLM API    │                   │
    │              │  (the easy   │                   │
    │              │    part)     │                   │
    │              └──────────────┘                   │
    │                                                 │
    └─────────────────────────────────────────────────┘

The LLM API call is the smallest box. Everything around it (caching, memory, security, cost control, rendering, coordination) is the actual product.

For anyone building AI agents: the model selection matters less than you think. The harness is where the engineering lives, and where the differentiation happens.
