AlphaCode 3 Improves Itself: Self-Play Training Achieves Competitive Programming Gold

Hi there, little explorer! Guess what? We have some super cool news about a robot brain named AlphaCode 3!

Imagine you have a toy car, and you want it to go faster. Instead of asking grown-ups for help, your car itself tries different ways to go fast. It tries turning the wheels this way, then that way, and sees what works best!

AlphaCode 3 is like that car! It's a computer program that learns to solve puzzles, like building with LEGOs, but with computer code. It doesn't need people to teach it every single step.

It plays a game all by itself, trying to solve puzzles. If it makes a mistake, it learns from it! It's like it says, "Oops, that didn't work! Let me try something else!"

And guess what? It got so good, it won a gold medal! That means it's super, super smart at solving these code puzzles, almost like a superhero! Isn't that amazing?

DeepMind's AlphaCode 3 uses self-play and automated test generation to continuously improve its coding capabilities, reaching gold medal performance on Codeforces without human-labeled training data.

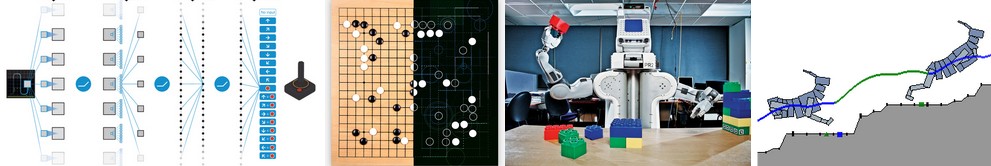

DeepMind has published research on AlphaCode 3, a coding AI system that achieves gold medal performance on competitive programming platforms through a novel self-improvement paradigm. Unlike previous systems that relied on human-labeled training data, AlphaCode 3 generates its own training signal through automated test case generation and self-play.

The system works by generating candidate solutions to programming problems, automatically generating test cases to evaluate these solutions, and using the results to refine its approach. This self-play loop allows the system to identify its own weaknesses and generate targeted training examples to address them.
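As a rough illustration of that loop, here is a minimal Python sketch of the generate, test, and evaluate cycle on a toy sorting task. It is not DeepMind's implementation: the candidate programs, the `generate_tests` helper, and the `is_valid_sort` checker are all hypothetical stand-ins, and the property check takes the place of whatever evaluation signal the real system uses.

```python
import random
from collections import Counter

def generate_tests(rng, n_random=50, max_len=6):
    """Hypothetical test generator: fixed edge cases plus random small lists."""
    edge_cases = [[], [1, 0], [2, 2, 1]]
    random_cases = [
        [rng.randint(0, 3) for _ in range(rng.randint(0, max_len))]
        for _ in range(n_random)
    ]
    return edge_cases + random_cases

def is_valid_sort(inp, out):
    """Property check: output is non-decreasing and a permutation of the input."""
    ordered = all(a <= b for a, b in zip(out, out[1:]))
    return ordered and Counter(inp) == Counter(out)

# Stand-ins for model-sampled candidate programs (two are deliberately buggy).
CANDIDATES = {
    "identity": lambda xs: list(xs),          # bug: never sorts
    "dedup_sort": lambda xs: sorted(set(xs)),  # bug: drops duplicates
    "full_sort": lambda xs: sorted(xs),
}

def evaluate(candidates, tests):
    """Run every candidate on every test; record pass rate and failing inputs."""
    report = {}
    for name, fn in candidates.items():
        failures = [t for t in tests if not is_valid_sort(t, fn(t))]
        report[name] = {
            "pass_rate": 1 - len(failures) / len(tests),
            "failures": failures,
        }
    return report

rng = random.Random(0)  # seeded so the run is repeatable
tests = generate_tests(rng)
report = evaluate(CANDIDATES, tests)
best = max(report, key=lambda name: report[name]["pass_rate"])
print(best, {name: round(r["pass_rate"], 2) for name, r in report.items()})
```

In this sketch, the `failures` lists play the role of the "weaknesses" described above: in a real training loop they would be fed back as targeted examples, whereas here they are only collected.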

On Codeforces, one of the world's best-known competitive programming platforms, AlphaCode 3 achieved a rating equivalent to that of a gold medalist, placing it in the top 0.1% of human competitors. Particularly impressive was its performance on novel problem types not represented in its training data, which suggests genuine algorithmic reasoning rather than pattern matching.

The research raises important questions about whether AI systems can improve themselves without human supervision. While the current system is limited to the well-defined domain of competitive programming, the underlying self-improvement paradigm could be applied to other domains.


