AI models fail at robot control without human-designed building blocks but agentic scaffolding closes the gap

A new framework from Nvidia, UC Berkeley, and Stanford systematically tests how well AI models can control robots through code. The findings: without human-designed abstractions, even top models fail, but methods like targeted test-time compute scaling closes the gap. The article AI models fail at robot control without human-designed building blocks but agentic scaffolding closes the gap appeared first on The Decoder .

Skip to content

Apr 2, 2026

Nvidia

A new framework from Nvidia, UC Berkeley, and Stanford systematically tests how well AI models can control robots through code. The findings: without human-designed abstractions, even top models fail, but methods like targeted test-time compute scaling closes the gap.

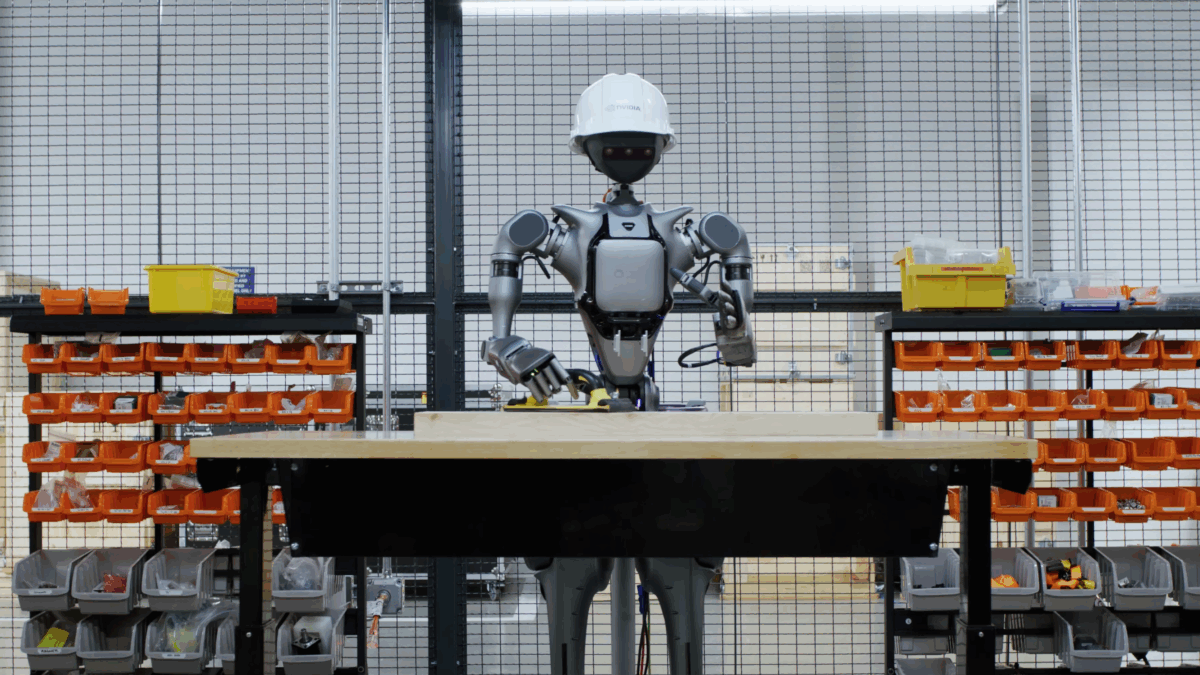

Researchers from Nvidia, UC Berkeley, Stanford, and Carnegie Mellon have released CaP-X, an open-access framework that systematically evaluates how well AI coding agents can control robots through self-written programs. The bottom line: none of the twelve frontier models tested can match the reliability of human-written programs in a single attempt.

The core idea behind the paper is straightforward: instead of training robot-specific models on massive motion datasets, general-purpose language models write the control code. To make this work, the researchers adapted techniques that have proven effective for language models and applied them to robotics - reinforcement learning with verifiable rewards from physics simulations, test-time compute scaling through parallel solution generation and self-correction, and agentic patterns like automated debugging and accumulating reusable functions, similar to what software engineering agents already do.

The team tested models including Gemini-3-Pro, GPT-5.2, Claude Opus 4.5, and several open-source models like Qwen3-235B and DeepSeek-V3.1 across seven manipulation tasks, ranging from simple cube lifting to bimanual coordination.

Even the strongest models tested fail at most robotics tasks when working without high-level abstractions.

Performance varies dramatically depending on what building blocks the models have access to. When given pre-built commands like "grasp object X and lift it," they only need to arrange the right sequence. But when those convenience functions are replaced with the underlying low-level steps - image segmentation, depth processing, grasp planning, and inverse kinematics - success rates drop sharply. The models then have to correctly combine dozens of lines of code where a single function call used to suffice.

Feeding camera images directly to models hurts performance

Interestingly, feeding raw camera images directly into the models' context actually makes results worse. The researchers suspect a gap in cross-modal alignment - foundation models are rarely trained to reason simultaneously about software code and physical robot execution.

What works better is an intermediate "Visual Differencing Module." A separate vision-language model first describes the scene in text, extracts task-relevant properties, and after each execution step, reports what changed in the image and whether the task is complete. This structured text then serves as the coding agent's basis for generating the next round of code. The approach consistently outperforms both raw console outputs and direct image inputs.

A training-free agent can match human-level performance

Building on these findings, the researchers developed CaP-Agent0, a training-free system with three core components. First, the Visual Differencing Module described above, which provides a textual status report after each step. Second, an automatically generated function library: the system collects helper functions from successful runs - things like coordinate transformations or grasp pose filtering - and makes them available for future tasks. Third, parallel code generation: nine solution candidates are generated simultaneously, either from a single model at different temperatures or distributed across Gemini-3-Pro, GPT-5.2, and Claude Opus 4.5. A supervisory agent then synthesizes the candidates into a final solution.

Some of these ideas trace back to Voyager, a Minecraft agent from the team around co-author Jim Fan, director of robotics at Nvidia and co-lead of the company's GEAR Lab, which is also responsible for Nvidia's Gr00t models.

The model breaks a high-level task (take the elevator down) into subtasks automatically (press the button, lower the arm, drive into the elevator), using programming and math tools to execute the button press. | Video: https://capgym.github.io/

Despite relying entirely on low-level building blocks, CaP-Agent0 matches or beats human-written code on four of seven tasks. The researchers also benchmarked the system against trained Vision-Language-Action models (VLAs), which control robots through learned motion patterns from large demonstration datasets rather than code. On the LIBERO-PRO benchmark, which tests tasks with altered object positions and rephrased instructions, CaP-Agent0 performed similarly to Physical Intelligence's VLA model pi0.5 on position changes.

When task descriptions were rephrased, CaP-Agent0 proved significantly more robust, according to the team, because it interprets instructions directly instead of depending on a specific training distribution.

Reinforcement learning gives a small model a major boost

Beyond the training-free CaP-Agent0, the framework also introduces CaP-RL, a method for improving language models at robot control through reinforcement learning. The model is trained using reward signals from physics simulations: when generated code produces a successful robot movement, the model receives positive feedback.

A Qwen2.5-Coder-7B model trained this way improved its success rate on cube stacking from 4 to 44 percent in simulation. On a real Franka robot, the same model hit 76 percent without any additional fine-tuning, because it optimizes through abstract programming interfaces rather than camera images. The visual gap between simulation and reality barely matters as a result.

The team proposes a hybrid systems where coding agents handle high-level task logic and recovery while specialized vision-language-action policies handle the fine-grained motor control. The full CaP-X framework - including CaP-Gym, CaP-Bench, CaP-Agent0, and CaP-RL - is available as an open-access platform for the research community.

AI News Without the Hype – Curated by Humans

As a THE DECODER subscriber, you get ad-free reading, our weekly AI newsletter, the exclusive "AI Radar" Frontier Report 6× per year, access to comments, and our complete archive.

Subscribe now

-

More than 16% discount.

-

Read without distractions – no Google ads.

-

Access to comments and community discussions.

-

Weekly AI newsletter.

-

6 times a year: “AI Radar” – deep dives on key AI topics.

-

Up to 25 % off on KI Pro online events.

-

Access to our full ten-year archive.

-

Get the latest AI news from The Decoder.

Subscribe to The Decoder

Sign in to highlight and annotate this article

Conversation starters

Daily AI Digest

Get the top 5 AI stories delivered to your inbox every morning.

More about

modelagenticagent

How I Replaced 6 Paid AI Subscriptions With One Free Tool (Saved $86/Month)

I was paying $86/month for AI tools. Then I found one free platform that replaced all of them. Here's the exact breakdown: The Tools I Cancelled Tool Cost What I Replaced It With ChatGPT Plus $20/mo Free GPT-4o on Kelora Otter.ai $17/mo Free audio transcription Jasper $49/mo Free AI text tools Total $86/mo $0 GPT-4o — Free Kelora gives direct access to GPT-4o, the same model inside ChatGPT Plus. No subscription, no credit card. I use it daily for code reviews, email drafts, and research summaries. Audio Transcription — Free Upload any audio file — meeting recordings, lectures, podcasts — and get accurate text back in seconds. Replaced my Otter.ai subscription instantly. AI Writing — Free Blog drafts, product copy, social posts. The text tools cover everything Jasper did for me at $49/month

Own Your Data: The Wake-Up Call

Data plays a critical part in our lives. And with the rapid changes driven by the recent evolution of AI, owning your data is no longer optional! First , we need to answer the following question: "Is your data really safe?" On April 1st, 2026 , an article was published on the Proton blog revealing that Big Tech companies have shared data from 6.9 million user accounts with US authorities over the past decade. Read the full Proton research for more details. Read google's transparency report for user data requests for more details. On January 1st, 2026 , Google published its AI Training Data Transparency Summary it contains the following: This is Google basically saying: "We use your data to train our AI models, but trust us, we're careful about it." On November 24, 2025 , Al Jazeera publish

Claude Code subagent patterns: how to break big tasks into bounded scopes

Claude Code Subagent Patterns: How to Break Big Tasks into Bounded Scopes If you've ever given Claude Code a massive task — "refactor the entire auth system" — and watched it spiral into confusion after 20 minutes, you've hit the core problem: unbounded scope kills context . The solution is subagent patterns: structured ways to decompose work into bounded, parallelizable units. Why Big Tasks Fail in Claude Code Claude Code has a finite context window. When you give it a large task: It reads lots of files → context fills up It loses track of what it read first It starts making contradictory changes You hit the context limit mid-task The session crashes and you lose progress The fix isn't a bigger context window — it's smaller tasks. The Subagent Pattern Instead of one Claude session doing e

Knowledge Map

Connected Articles — Knowledge Graph

This article is connected to other articles through shared AI topics and tags.

Discussion

Sign in to join the discussion

No comments yet — be the first to share your thoughts!